2016), relative entropy does not appear to have been considered in the conventional satellite remote sensing literature as an alternative or supplement to SIC. Those authors recommended the use of an alternative metric known as the Kullback–Leibler divergence ( Kullback and Leibler 1951) or, more commonly in the atmospheric literature, relative entropy. 2002) and data assimilation ( Xu 2006 Xu et al. Questions about the meaningfulness of SIC as a primary information metric for quantitative estimation appear to have been first raised not in the satellite retrieval community that most heavily employs it but rather in the contexts of climate data analysis ( Majda et al. Beginning in 2008, a few investigators relaxed the Gaussian assumption and applied Shannon information theory to discretized prior and posterior PDFs ( Posselt et al. 2013 Gambacorta and Barnet 2013 Turner and Löhnert 2014 Xu and Wang 2015 Wang et al. 2008 King and Vaughan 2012 Ottaviani et al. 2006 Di Michele and Bauer 2006 Lebsock et al.

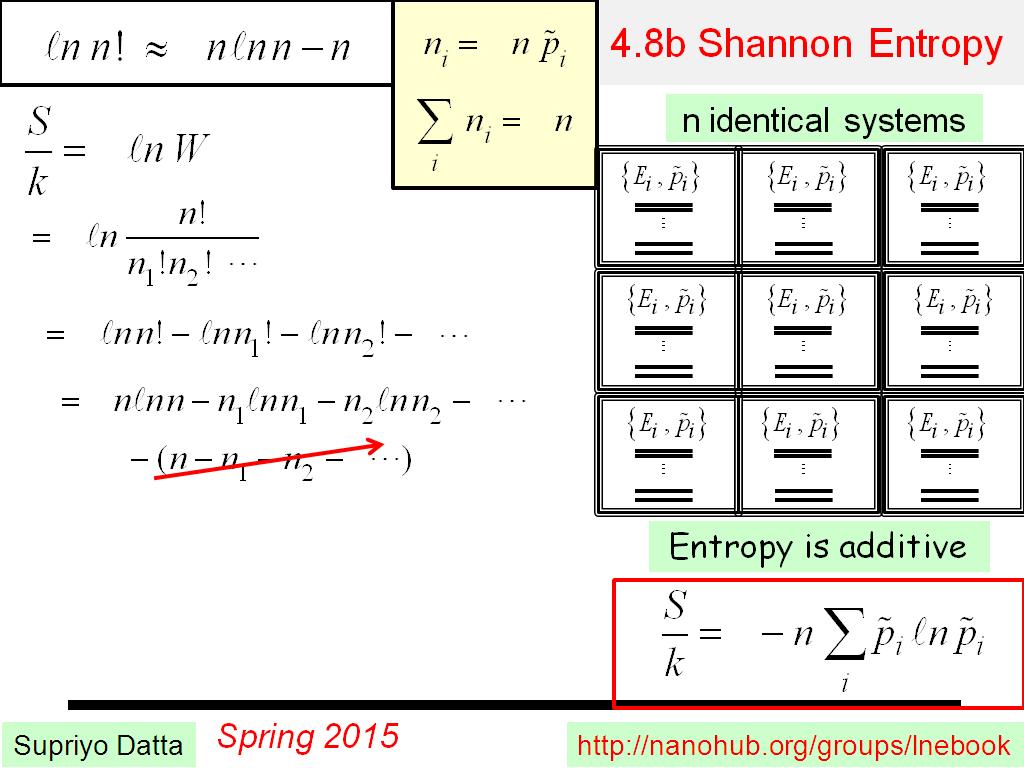

The textbook of Rodgers (2000) further solidified the acceptance of SIC as a formal basis for quantitatively describing the value of added measurements, and a number of authors have adopted that formalism, primarily under the assumption of Gaussian error statistics ( Sofieva and Kyrölä 2003 Engelen and Stephens 2004 L’Ecuyer et al. The so-called Shannon information content (SIC) of an indirect measurement is defined by the resulting reduction in the Shannon entropy of the probability density function (PDF) of the variable of interest.Īpplications of Shannon information theory to sensor channel selection and related problems continued with Eyre (1990), Huang and Purser (1996), Rodgers (1996), and Rabier et al. Peckham (1974), citing Wiener (1948) and Feinstein (1958), was apparently the first to invoke Shannon information theory ( Shannon 1948) in this context for the optimization of satellite infrared instruments for atmospheric temperature retrievals. In the design and evaluation of satellite sensors and/or the associated retrieval algorithms, there is often a need to objectively quantify the improvement in one’s knowledge of x as a consequence of the information provided by y, either in comparison to climatological knowledge or as the result of adding channels to a sensor and/or retrieval algorithm. The former variable may itself be either a scalar variable (e.g., surface temperature or precipitation rate) or a vector (e.g., temperature or humidity profile). The remote sensing of atmospheric or geophysical variables generally entails the indirect inference of a variable of interest x from a direct measurement of y, where the latter variable is commonly a radiance or a vector of radiances at specific wavelengths. Thus, neither information metric can necessarily be counted on to respond in a predictable way to changes in the precision or quality of a retrieved quantity. 
Yet, even KL divergence is blind to the expected magnitude of errors as typically measured by the error variance or root-mean-square error. Following previous authors’ writing mainly in the data assimilation and climate analysis literature, the Kullback–Leibler (KL) divergence, also commonly known as relative entropy, is shown to suffer from fewer obvious defects in this particular context. The potentially severe shortcomings of SIC are illustrated with simple experiments that reveal, for example, that a measurement can be judged to provide negative information even in cases in which the postretrieval PDF is undeniably improved over an informed prior based on climatology. It is not widely appreciated, however, that Shannon information content (SIC) can be misleading in retrieval problems involving nonlinear mappings between direct observations and retrieved variables and/or non-Gaussian prior and posterior PDFs. Shannon entropy has long been accepted as a primary basis for assessing the information content of sensor channels used for the remote sensing of atmospheric variables.
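To make the two metrics discussed above concrete, here is a minimal Python sketch that computes SIC and KL divergence for discretized PDFs. It is only an illustration and not one of the article's experiments: the function names and the four-bin prior and posterior are hypothetical, with values invented for the example. It assumes each PDF is a list of probabilities over the same bins that sums to 1, and it uses base-2 logarithms so results are in bits.

```python
import math


def shannon_entropy(pdf):
    """Shannon entropy (bits) of a discretized PDF; zero-probability bins contribute nothing."""
    return -sum(p * math.log2(p) for p in pdf if p > 0)


def shannon_information_content(prior, posterior):
    """SIC: reduction in Shannon entropy going from the prior to the posterior."""
    return shannon_entropy(prior) - shannon_entropy(posterior)


def kl_divergence(posterior, prior):
    """KL divergence (relative entropy, bits) of the posterior with respect to the prior."""
    total = 0.0
    for q, p in zip(posterior, prior):
        if q > 0:
            if p <= 0:
                raise ValueError("prior must be nonzero wherever the posterior is nonzero")
            total += q * math.log2(q / p)
    return total


# Hypothetical values: a sharply peaked prior and a broader posterior
# whose probability mass has moved to other bins.
prior = [0.90, 0.05, 0.03, 0.02]
posterior = [0.05, 0.35, 0.35, 0.25]

sic = shannon_information_content(prior, posterior)
kl = kl_divergence(posterior, prior)

print(f"SIC = {sic:.3f} bits")
print(f"KL divergence = {kl:.3f} bits")
```

In this toy case the posterior is broader than the prior, so the SIC comes out negative, while the KL divergence is positive because the posterior differs from the prior. Neither number says anything about the expected size of the retrieval error, which is the limitation the text notes for both metrics.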
